Artificial intelligence is no longer limited to cloud servers. Intelligent processing is shifting closer to where data is generated, driving rapid growth in AI hardware for edge devices. From smart cameras and autonomous systems to industrial automation and healthcare equipment, edge AI enables faster decisions, lower latency, and better data security, since less raw data has to leave the device.

At the core of this evolution is advanced System-on-Chip (SoC) development, which integrates computing, memory, connectivity, and AI acceleration into compact, power-efficient silicon platforms.

AI hardware for edge devices refers to specialized semiconductor solutions designed to perform AI inference and data processing locally, without relying on continuous cloud connectivity.

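Common edge AI applications include the smart cameras, autonomous systems, industrial automation, and healthcare equipment mentioned above.

These applications demand low latency, real-time performance, and optimized power consumption, which makes SoC-based architectures the preferred choice.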
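To make the latency requirement concrete, here is a minimal Python sketch of a per-frame latency budget check. The stage names and millisecond figures are hypothetical and chosen only for illustration; they are not measurements from any real device or network.

```python
def frame_budget_ms(fps):
    """Time available to process one frame at the given frame rate."""
    return 1000.0 / fps


def meets_deadline(stage_latencies_ms, fps):
    """True if the summed pipeline latency fits within one frame period."""
    return sum(stage_latencies_ms.values()) <= frame_budget_ms(fps)


# Hypothetical on-device pipeline for a 30 fps camera (values are made up).
on_device = {"capture": 5.0, "inference": 12.0, "postprocess": 3.0}

# Same pipeline with an assumed 40 ms network round trip to a remote server.
via_cloud = {**on_device, "network_round_trip": 40.0}

print(meets_deadline(on_device, fps=30))  # True: 20 ms fits in ~33.3 ms
print(meets_deadline(via_cloud, fps=30))  # False: 60 ms exceeds ~33.3 ms
```

Under these assumed numbers, the local pipeline fits inside one frame period while the version with a network hop does not, which is the kind of margin that pushes real-time workloads toward on-device processing.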

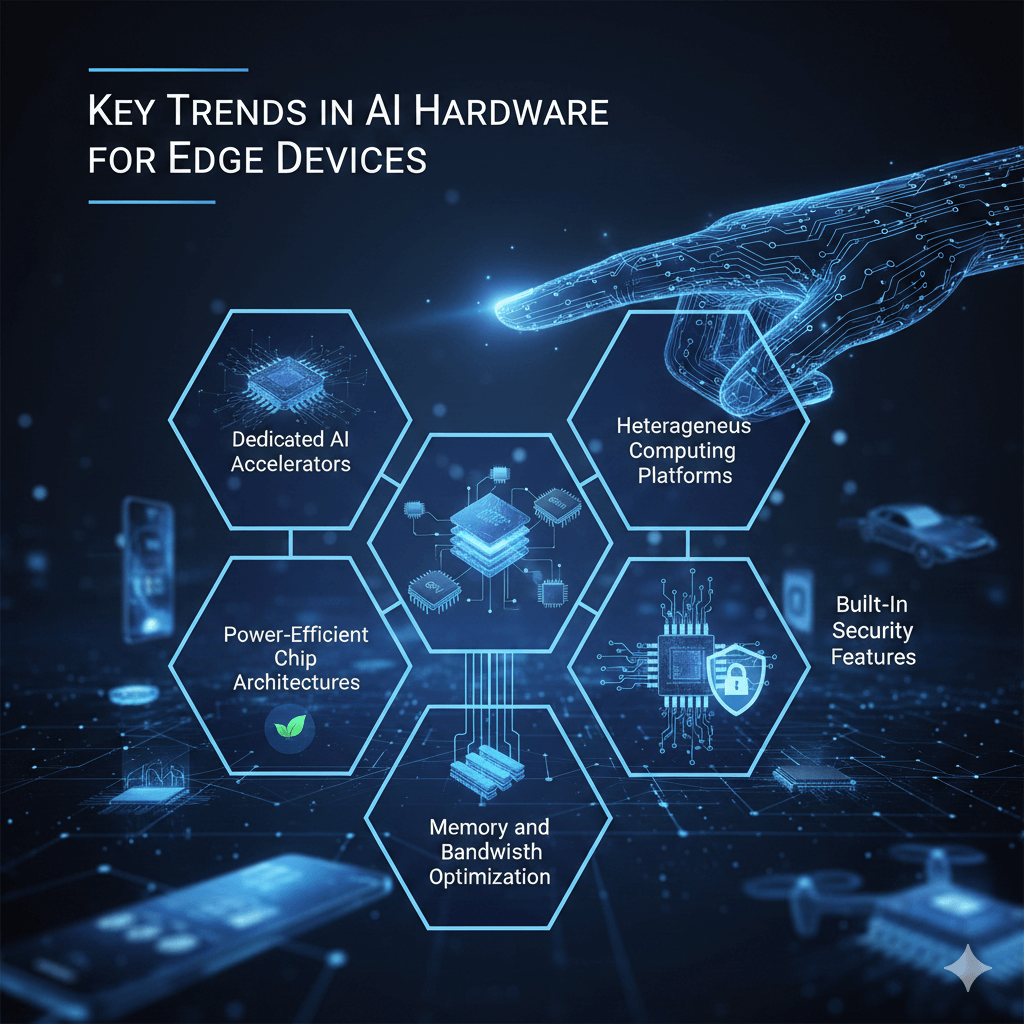

Traditional processors struggle to meet the performance and energy efficiency requirements of AI workloads at the edge. Modern SoC development addresses these challenges by integrating multiple processing engines into a single chip.

Key advantages of AI-enabled SoCs include:

By combining CPUs, GPUs, NPUs, and dedicated accelerators, SoCs deliver scalable performance tailored for edge environments.

Many modern SoCs include specialized Neural Processing Units (NPUs) for deep learning inference. Compared with running the same models on a general-purpose CPU, these accelerators improve performance while reducing energy consumption, which makes them well suited to real-time applications such as facial recognition and object detection.

Energy efficiency is a top priority for edge AI hardware. Semiconductor designers are implementing:

These innovations help maximize performance per watt while extending device battery life.
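As a rough illustration of how performance per watt relates to battery life, the following Python sketch compares two hypothetical accelerator configurations. All figures are invented for the example, and the battery model is idealized: it ignores conversion losses, idle power, and battery aging.

```python
def perf_per_watt(tops, watts):
    """Throughput delivered per watt of power drawn (TOPS/W)."""
    return tops / watts


def battery_life_hours(battery_wh, avg_power_w):
    """Ideal runtime in hours for a battery of the given capacity."""
    return battery_wh / avg_power_w


# Hypothetical figures: same throughput, different power draw.
baseline = perf_per_watt(tops=4.0, watts=4.0)
optimized = perf_per_watt(tops=4.0, watts=2.0)
print(baseline, optimized)  # 1.0 2.0

# With the same assumed 10 Wh battery, halving power doubles the runtime.
print(battery_life_hours(10.0, 4.0))  # 2.5
print(battery_life_hours(10.0, 2.0))  # 5.0
```

In this simplified model, doubling performance per watt at constant throughput doubles the runtime on the same battery.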

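Edge AI workloads benefit from heterogeneous SoC architectures that combine different processing engines, such as CPUs, GPUs, NPUs, and dedicated accelerators, on a single chip.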

This approach enables better task distribution and ensures real-time performance across multiple workloads.
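The idea of distributing tasks across engines can be sketched in a few lines of Python. This is a toy model under stated assumptions: the workload kinds, the preference table, and the `Task`, `assign_engine`, and `dispatch` names are all hypothetical, and a real SoC runtime would base this decision on far more information (load, power state, supported operators).

```python
from dataclasses import dataclass

# Hypothetical preference order per workload kind (most preferred first).
PREFERRED_ENGINES = {
    "control": ["cpu"],
    "image_preprocess": ["gpu", "cpu"],
    "nn_inference": ["npu", "gpu", "cpu"],
}


@dataclass
class Task:
    name: str
    kind: str


def assign_engine(task, available):
    """Return the first preferred engine that is present on this SoC."""
    for engine in PREFERRED_ENGINES.get(task.kind, ["cpu"]):
        if engine in available:
            return engine
    raise ValueError(f"no engine available for task {task.name!r}")


def dispatch(tasks, available):
    """Map each task name to the engine chosen for it."""
    return {t.name: assign_engine(t, available) for t in tasks}


tasks = [
    Task("detect_objects", "nn_inference"),
    Task("resize_frame", "image_preprocess"),
    Task("update_ui", "control"),
]

print(dispatch(tasks, available={"cpu", "gpu", "npu"}))
print(dispatch(tasks, available={"cpu"}))
```

With all three engines present, each task lands on its most specialized engine; on a CPU-only device the same tasks fall back to the CPU, which still works but gives up the parallelism that a heterogeneous design offers.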

High-speed data movement is critical for AI performance. Modern AI hardware for edge devices is focusing on:

These enhancements improve throughput and reduce dependency on external memory.
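One common way to reason about the link between throughput and external memory traffic is the roofline model. The text above does not name it, so it is used here only as an illustrative assumption, and the accelerator figures are hypothetical.

```python
def attainable_gops(peak_gops, mem_bandwidth_gbs, ops_per_byte):
    """Roofline estimate: throughput is capped by compute or by memory traffic.

    ops_per_byte is the number of operations performed per byte fetched
    from external memory (arithmetic intensity).
    """
    return min(peak_gops, mem_bandwidth_gbs * ops_per_byte)


# Hypothetical accelerator: 1000 GOPS peak, 10 GB/s external memory bandwidth.
PEAK_GOPS = 1000.0
BANDWIDTH_GBS = 10.0

# Low data reuse: 20 operations per external byte, so memory is the limit.
print(attainable_gops(PEAK_GOPS, BANDWIDTH_GBS, 20.0))   # 200.0

# Higher reuse (for example via on-chip buffering): 200 operations per byte.
print(attainable_gops(PEAK_GOPS, BANDWIDTH_GBS, 200.0))  # 1000.0
```

In this model, keeping more data on chip raises the operations performed per external byte, which moves a workload from memory-bound toward the compute peak without changing the external bandwidth.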

Security is becoming a core requirement in edge AI applications. Today’s SoCs include hardware-level protection such as:

These features help protect sensitive data and ensure system integrity in connected environments.

Despite rapid advancements, several challenges remain:

To address these challenges, semiconductor companies are adopting IP reuse strategies, modular SoC architectures, and advanced verification workflows.

The next generation of edge AI hardware will focus on:

These innovations will further accelerate adoption across automotive, healthcare, smart cities, and industrial automation sectors.

As demand for AI hardware for edge devices continues to grow, companies need reliable semiconductor partners with deep expertise in SoC design, verification, validation, and post-silicon support.

Silicon Patterns offers end-to-end semiconductor engineering services that help organizations accelerate edge AI product development. With strong capabilities in design verification, physical design, DFT, validation, and system-level testing, Silicon Patterns enables faster time-to-market and high-quality silicon outcomes.

Whether you are developing AI-enabled SoCs for automotive, industrial IoT, or consumer electronics, Silicon Patterns provides scalable, cost-effective, and performance-driven solutions tailored to your business needs.

Empower your edge AI innovation with Silicon Patterns — where silicon meets intelligence.
